Self-driving RC Car

Robotics, Applied Machine Learning

I’ve built a self-driving RC car model that can:

- Autonomously switch lanes

- Track and detect objects

- Detect lanes and keep the car in them

- Use a simulator to rapidly prototype models and pre-train for real-world conditions

I also made a very detailed tutorial on how to do so:

It includes a lot of visual examples, covering everything from building the hardware and kernel hacking to the entire code and development process.

Give it a look and let me know if you’d like to build your own! I’d be happy to help.

A quick, visual TL;DR:

How I built the hardware

There were a lot of parts and tools involved. Here’s a pic of the still-packaged hardware:

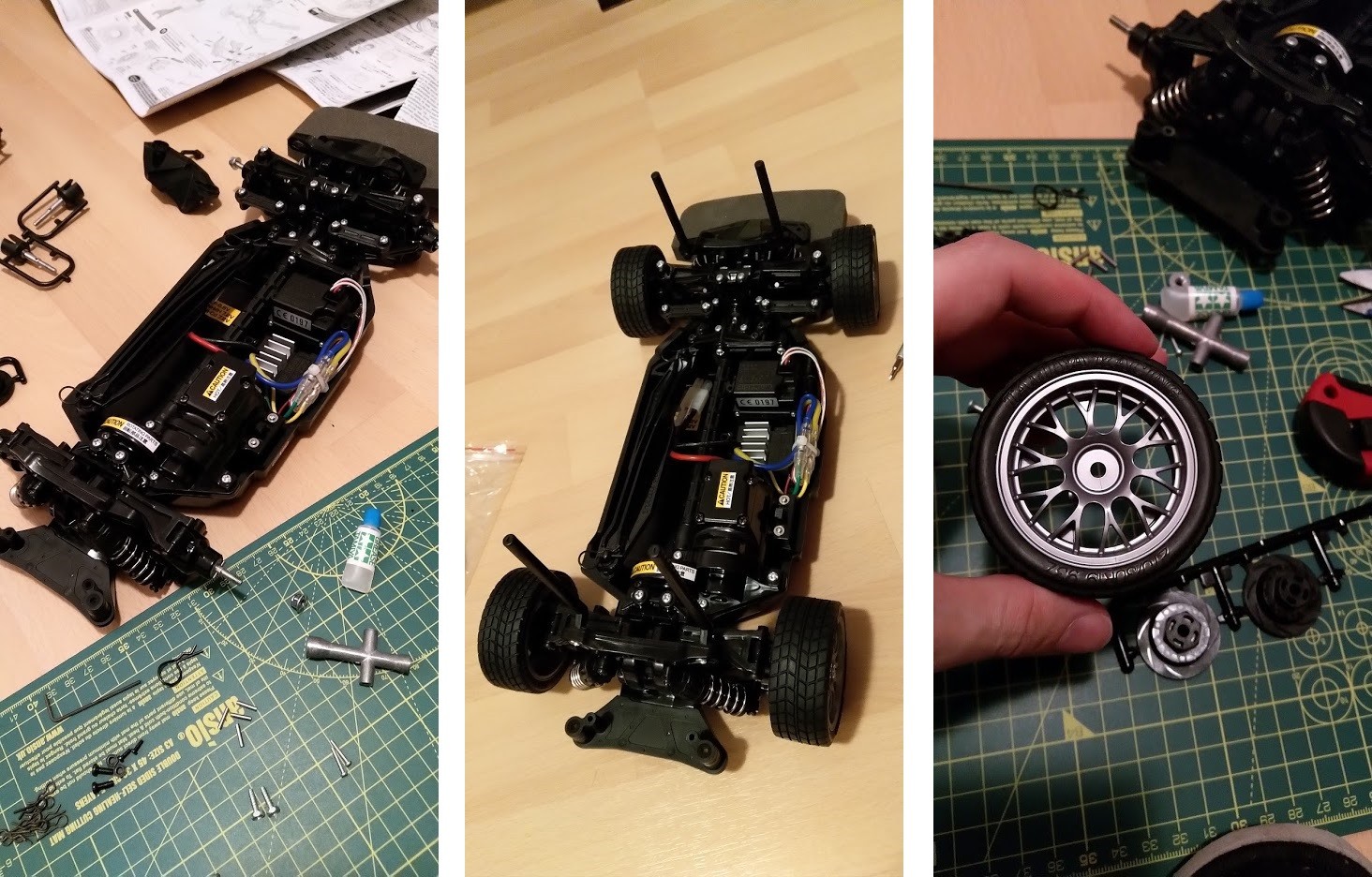

First, I had to assemble the RC car model:

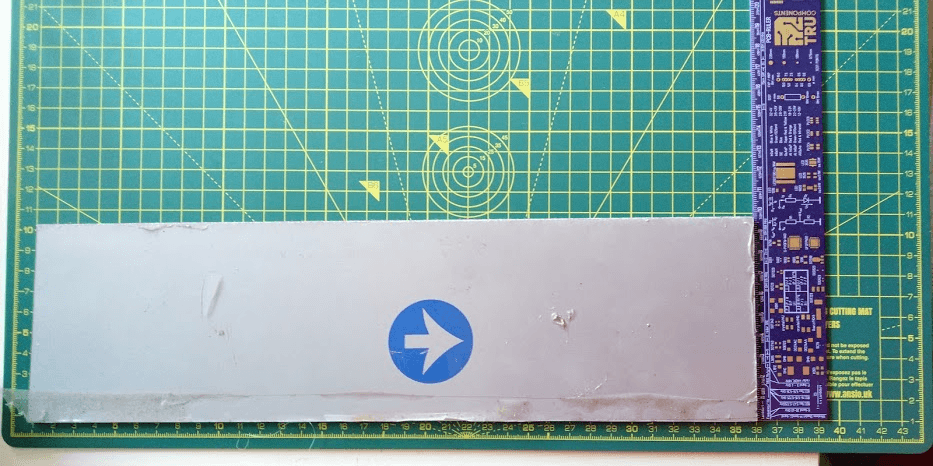

Then I had to figure out how to mount the rest of the “smarter” hardware on it, so I made a 3D model:

The first few iterations were 3D printed:

And the final tested design was built using aerospace aluminium:

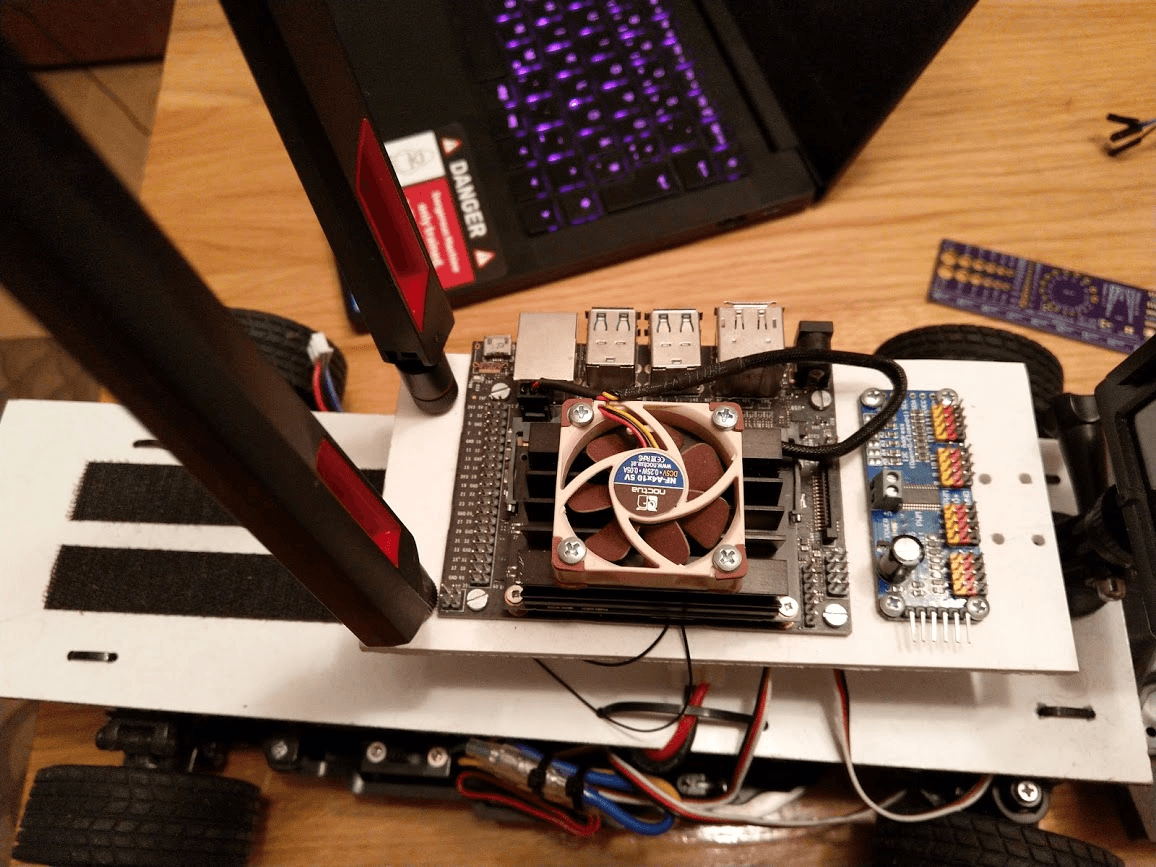

Then the Jetson Nano and the I²C/PWM Servo driver were mounted on the board:

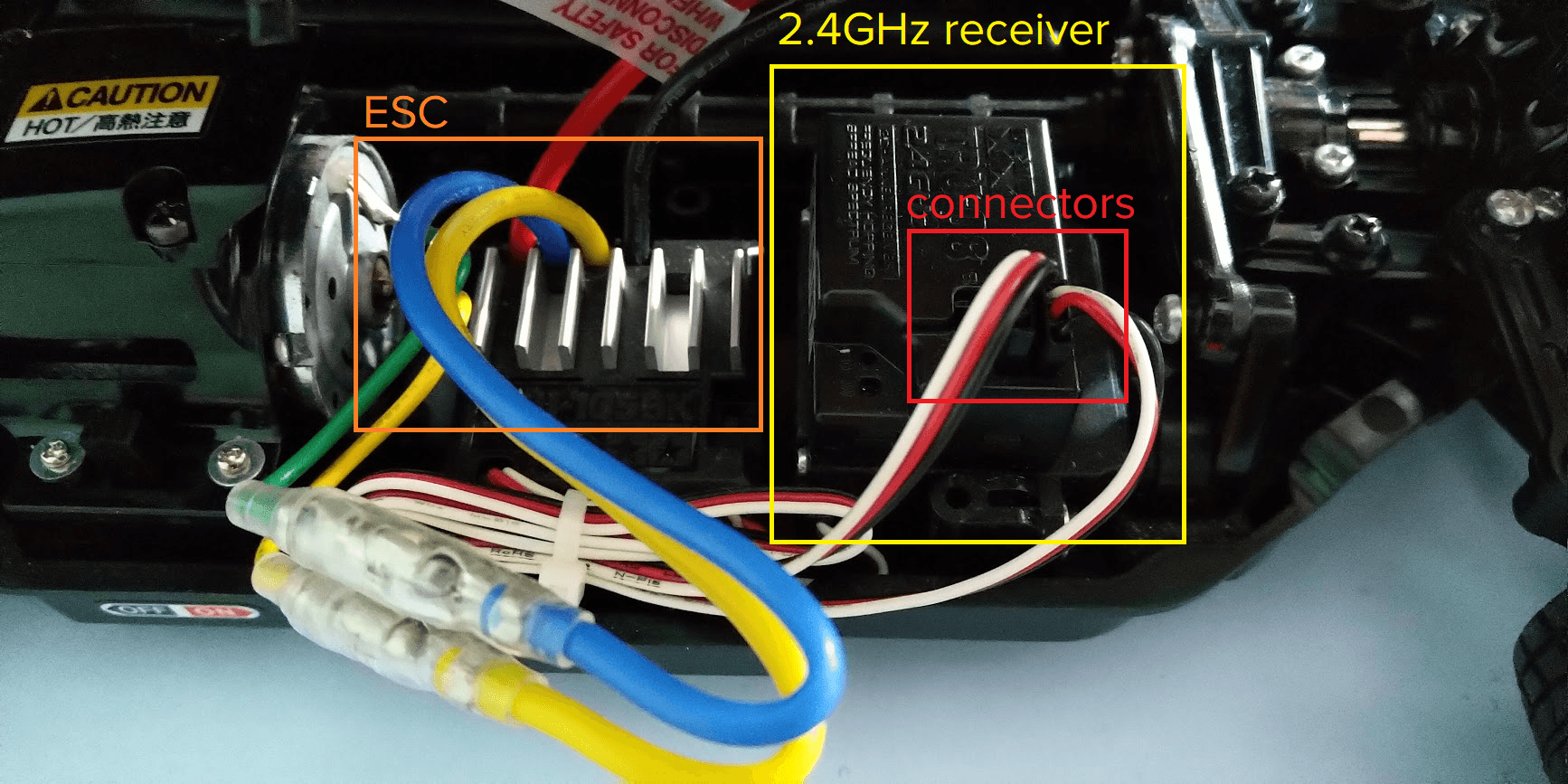

The RC car was interfaced with the Nano via the driver:

This allows programming and controlling the RC through software!

How I built the software

The very high-level overview

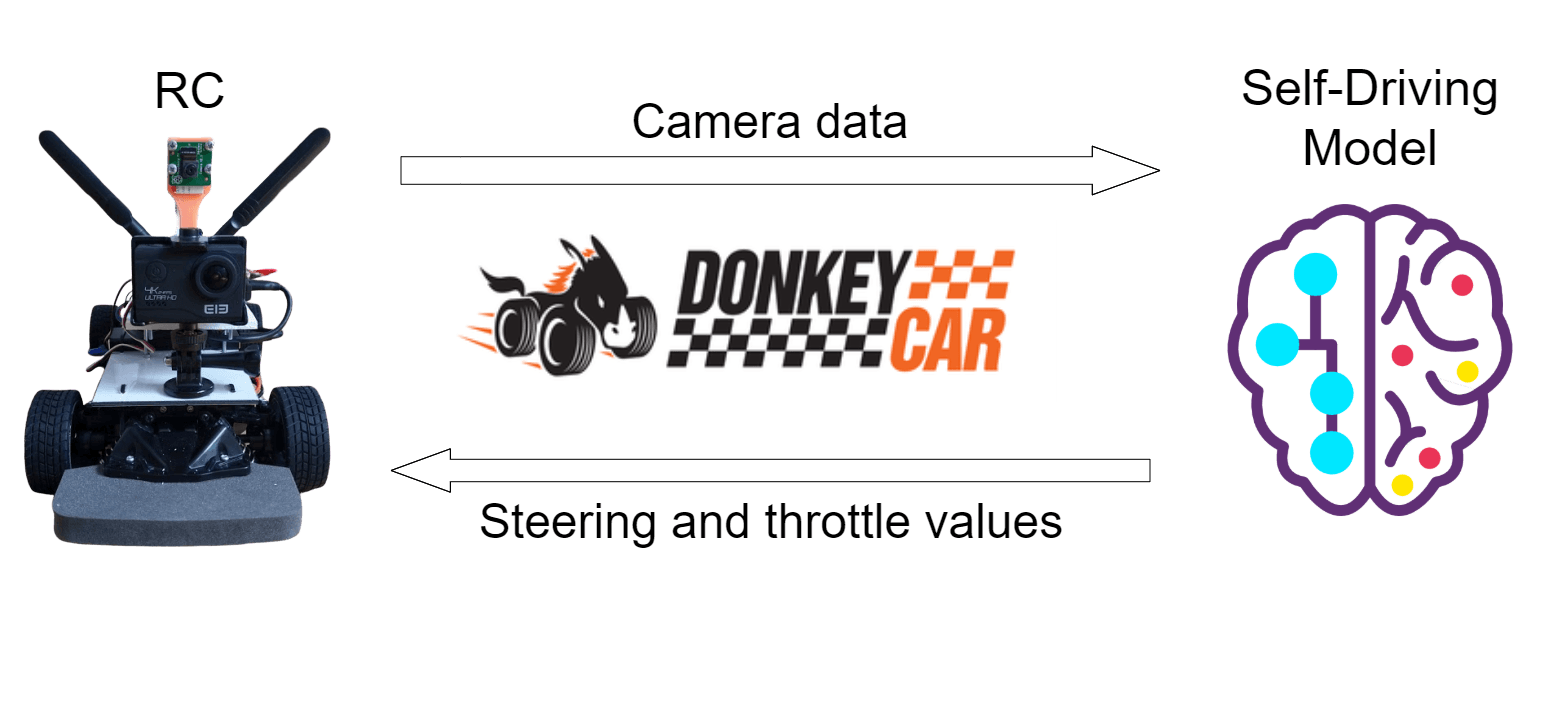

As shown above, the camera (and sensor) data is sent from the RC to a model which analyses it and tells the RC what it should do.

With Donkey, it’s easy to create an interface to:

- (Pre) Process the camera data

- Convert the steering/throttle values to actual signals for the RC to understand

- Actually steer the RC and increase/decrease throttle

- Collect and label training data

- Define and train custom models

- Control the car using a Web interface or a gamepad

- And even use a simulator to rapidly test and train models

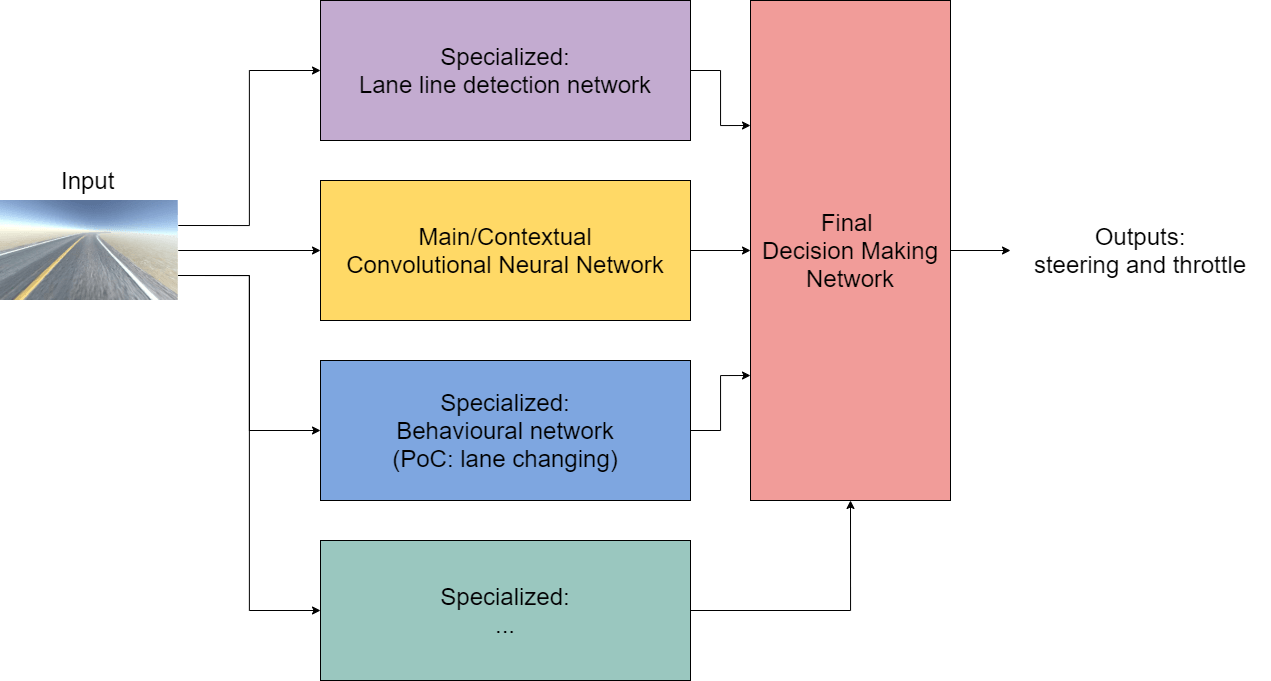
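To make the "convert the steering/throttle values to actual signals" step a bit more concrete, here’s a minimal sketch of how normalized values in the range -1.0 to 1.0 could be mapped to pulse values for a 12-bit PWM servo driver. The constants and function names are placeholders I picked for illustration, not the project’s actual calibration or Donkey’s API; real values have to be found by calibrating the specific servo and ESC.

```python
# Hypothetical calibration values in driver ticks (12-bit, 0..4095 per period).
# These are illustrative assumptions; calibrate them for your own servo and ESC.
STEERING_LEFT_PWM = 460
STEERING_RIGHT_PWM = 290
THROTTLE_FORWARD_PWM = 500
THROTTLE_STOPPED_PWM = 370
THROTTLE_REVERSE_PWM = 220


def map_range(value, in_min, in_max, out_min, out_max):
    """Clamp value to [in_min, in_max], then map it linearly to the output range."""
    value = max(in_min, min(in_max, value))
    ratio = (value - in_min) / (in_max - in_min)
    return int(round(out_min + ratio * (out_max - out_min)))


def steering_to_pwm(angle):
    """angle: -1.0 (full left) to 1.0 (full right)."""
    return map_range(angle, -1.0, 1.0, STEERING_LEFT_PWM, STEERING_RIGHT_PWM)


def throttle_to_pwm(throttle):
    """throttle: -1.0 (full reverse) to 1.0 (full forward), 0.0 is stopped."""
    if throttle >= 0:
        return map_range(throttle, 0.0, 1.0,
                         THROTTLE_STOPPED_PWM, THROTTLE_FORWARD_PWM)
    return map_range(throttle, -1.0, 0.0,
                     THROTTLE_REVERSE_PWM, THROTTLE_STOPPED_PWM)


if __name__ == "__main__":
    for value in (-1.0, 0.0, 0.5, 1.0):
        print(value, steering_to_pwm(value), throttle_to_pwm(value))
```

Throttle is mapped in two segments because the stopped pulse is usually not exactly halfway between full reverse and full forward.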

The final neural network architecture

I wanted a series of specialized subnetworks, each of which would take the input data and extract highly specific features from it using (relatively) specialized procedures. Their outputs are plugged into the final layer, along with contextual data from a first convolutional network that works on the raw input image. Together, these give the final part of the network enough context about the world, and enough information, to control the RC appropriately.

It looks like this:
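As a rough illustration of the data flow only (not the actual network), here’s a toy sketch in plain Python: a few independent branches each turn the same image into a small feature vector, the vectors are concatenated, and a final linear layer produces steering and throttle. Every function here is a hypothetical stand-in I made up for the sketch; the real branches are neural networks, and the real implementation is on the project website.

```python
def context_branch(image):
    """Stand-in for the convolutional branch on the raw image: mean of each row."""
    return [sum(row) / len(row) for row in image]


def lane_branch(image):
    """Stand-in for a lane-specific branch: normalized column of the
    brightest pixel in the bottom row (assumes the image is wider than 1 px)."""
    bottom = image[-1]
    return [bottom.index(max(bottom)) / (len(bottom) - 1)]


def object_branch(image):
    """Stand-in for an object-specific branch: fraction of bright pixels."""
    pixels = [p for row in image for p in row]
    return [sum(1 for p in pixels if p > 0.5) / len(pixels)]


def final_layer(features, weights, bias=0.0):
    """A single linear unit over the concatenated features."""
    return sum(f * w for f, w in zip(features, weights)) + bias


def forward(image, steering_weights, throttle_weights):
    # Each branch sees the same input and works independently
    # (no shared backbone), then the features are concatenated.
    features = context_branch(image) + lane_branch(image) + object_branch(image)
    steering = final_layer(features, steering_weights)
    throttle = final_layer(features, throttle_weights)
    return features, steering, throttle


if __name__ == "__main__":
    image = [[0.0, 0.0, 1.0],
             [0.0, 1.0, 0.0]]
    print(forward(image, [0.0, 0.0, 1.0, 0.0], [0.0, 0.0, 0.0, 0.0]))
```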

I was playing around with that idea in my mind when I saw Andrej Karpathy’s talk on PyTorch at Tesla, where he explained their use of HydraNets. In a nutshell, because they have 1000 distinct output tensors (predictions), all of which need to know a ton of context and details about the scene, they use a shared backbone.

They actually have 48 networks that output a total of 1000 predictions, which is insane to do in real-time (on 1000x1000 images and 8 cameras) while being accurate enough to actually drive living humans on real roads. Though, they do have some pretty sweet custom hardware (FSD2), unlike my Jetson Nano 😢.

Now, it obviously makes much more sense to do what Tesla did and use a shared backbone, since a lot of the information the backbone extracts from the input images applies to all of the specialized tasks, so it doesn’t have to be learned all over again for each and every one of them.

But I figured I’d try my idea out anyway, since the main reason for doing this was learning to apply ML/DL to something I could actually see drive around my backyard. And when I taught the car behaviours like lane changing, it actually worked!

All of the details and the entire implementation can be read at the project website (rccar.orsolic.tech).

Lessons learned and obstacles overcome

I’ve learned a lot about machine learning, and for me, perhaps the most valuable piece of the thesis is the “master recipe” I’ve written for myself on how to train a good ML model with all of the tricks I’ve learned along the way.

I’ve also had funnier moments like my drill dying on me while I was trying to drill through the mounting plates (aluminium), which forced me to use this bad boy and drill it by hand:

12/10 would never do that again.

Another curious moment was when I found out that one of my earlier models used the horizon as the waypoint the RC car should be traveling to.

As far as I could gather, it was something similar to us humans traveling to a certain point, e.g. commuting to work. We know the general direction of our workplace and we start traveling towards it following any roads along the way.

The RC car did the same, with its final destination being the horizon on the looped track I was training it on, and the detected lane line telling it the “road” it should be taking on the way to it.

Here’s a visualization (saliency map) showing what I’m talking about. The visualization shows which pixels from the input images have the most effect on the direction and speed the car travels in.
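If you haven’t seen saliency maps before, here’s a minimal sketch of the general idea using occlusion sensitivity: blank out one pixel at a time and measure how much the model’s output changes. This is a simpler stand-in technique chosen for illustration, not necessarily how the visualization above was produced, and `toy_model` is a made-up model that only looks at the top row of the image (the "horizon").

```python
def occlusion_saliency(model, image, baseline=0.0):
    """For each pixel, return |model(image) - model(image with that pixel
    replaced by baseline)|. Larger values mean the pixel matters more."""
    reference = model(image)
    saliency = []
    for r, row in enumerate(image):
        saliency_row = []
        for c in range(len(row)):
            occluded = [list(rw) for rw in image]  # copy, then blank one pixel
            occluded[r][c] = baseline
            saliency_row.append(abs(model(occluded) - reference))
        saliency.append(saliency_row)
    return saliency


def toy_model(image):
    """Hypothetical steering model that depends only on the top row."""
    top = image[0]
    return sum(top) / len(top)


if __name__ == "__main__":
    image = [[0.2, 0.9],
             [0.5, 0.5]]
    for row in occlusion_saliency(toy_model, image):
        print(row)
```

In this toy case only the top row gets non-zero saliency, which is the same kind of pattern that gives away a model fixating on the horizon.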

The solution? Do a perspective transform and some preprocessing to extract the most important features (e.g. lanes).
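For reference, here’s a minimal sketch of what a perspective transform does, in plain Python: it solves for the 3x3 homography that maps four points in the camera image (a trapezoid around the road) onto a rectangle (a bird’s-eye view), which also cuts everything above the trapezoid, horizon included, out of the picture. The point coordinates are made-up assumptions for a 160x120 image, and in practice you’d use an image library to warp whole frames rather than single points.

```python
def solve_linear(a, b):
    """Solve a x = b with Gauss-Jordan elimination and partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("points are degenerate (matrix is singular)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for k in range(col, n + 1):
                    m[r][k] -= factor * m[col][k]
    return [m[i][n] / m[i][i] for i in range(n)]


def find_homography(src, dst):
    """Homography (with h33 fixed to 1) mapping 4 src points to 4 dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def warp_point(h, x, y):
    """Apply the homography to one point, including the projective divide."""
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    u = (h[0][0] * x + h[0][1] * y + h[0][2]) / w
    v = (h[1][0] * x + h[1][1] * y + h[1][2]) / w
    return u, v


# Hypothetical road trapezoid in the camera image and its bird's-eye rectangle.
SRC = [(60, 40), (100, 40), (160, 120), (0, 120)]
DST = [(0, 0), (160, 0), (160, 120), (0, 120)]

if __name__ == "__main__":
    H = find_homography(SRC, DST)
    for x, y in SRC:
        print((x, y), "->", warp_point(H, x, y))
```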